When AI Makes Poor Documentation Look Correct

AI assistants can find documentation faster than ever. But they cannot tell whether that documentation is still correct.

The Confidence Problem

Recently I had a conversation with a friend and former colleague about AI being used inside workplace documentation. His company relies heavily on Atlassian Confluence to store internal processes, guides, and operational knowledge.

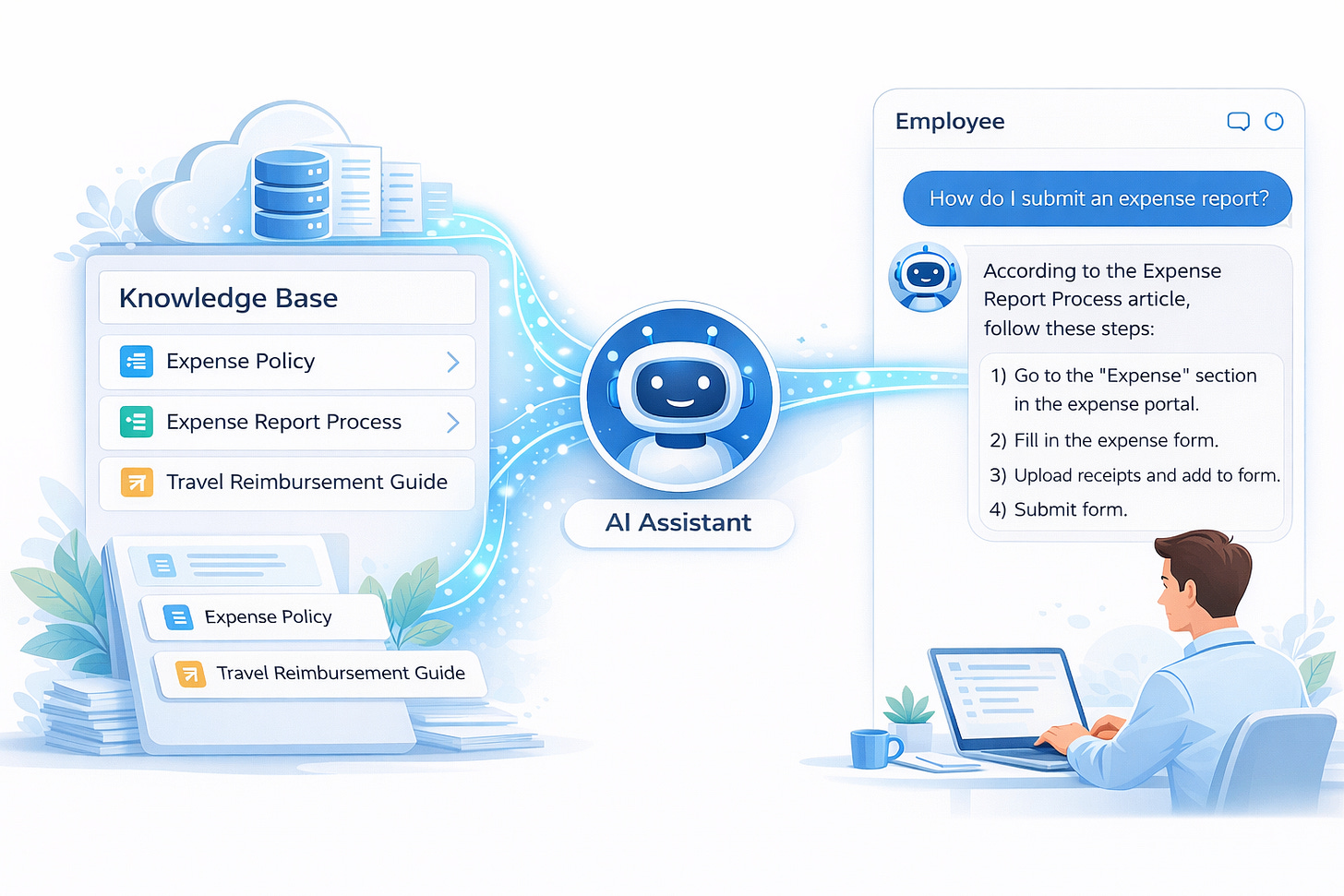

They had recently started using AI features that allow co-workers to ask questions and receive answers generated from the documentation in the system. Instead of searching through several pages and trying to piece together the right information, someone can simply ask a question and receive a summarized response.

During our conversation he told me something that had surprised him: the AI assistant was returning answers that were clearly incorrect.

The responses themselves read perfectly fine. They were clear, well written, and structured like straightforward explanations. Anyone reading the answer who did not already know the correct process would have no reason to question it. The AI assistant presented the response as a factual answer.

The problem was that the information behind that answer was not always correct. In some cases it came from documentation that was outdated or no longer reflected the current way of working.

For someone who already understood the process, the mistake was easy to see. For someone simply trying to learn how something works, the answer would look completely trustworthy.

That is where the real challenge begins. When incorrect information is delivered in a confident and well-written way, it becomes much harder to recognize that something is wrong. How would a new user ever know the difference?

The Knowledge Behind the Answer

What stood out to me in that conversation was that the issue was not really the AI assistant itself. The real issue was the documentation behind it.

Most organizations build documentation over many years. Processes change, systems are updated or replaced, reorganizations move people into new roles or out of the company, and project teams move on to new work. Pages that were once accurate slowly become outdated. Sometimes they are updated in one place but not another. Sometimes the same process gets documented multiple times in slightly different ways.

Over time a knowledge base grows, but not always in a clean or consistent way.

Before AI, this mostly created inconvenience. If someone searched for information, they might find several different pages and have to read through them to figure out which one was most current. It took time, but the process forced people to interpret what they were reading.

AI changes that experience. Instead of reading multiple pages, someone asks a question and receives a single answer. The system looks across the documentation and generates what it believes is the best response.

The AI does not truly understand which document represents the current truth. It simply works with the information it can access.

If the knowledge base contains outdated or conflicting material, the AI can still produce an answer that sounds complete and convincing. The answer can also depend on the predefined prompts the AI assistant is configured to use.

It brings us back to one of the oldest sayings in computing: garbage in, garbage out.

The difference now is that the output can look very clear and convincing.

The Archiving Assumption

Another part of our conversation stayed with me. My colleague mentioned that some of the documentation influencing the answers had already been archived. At least, that was the assumption.

In systems like Confluence, archiving usually moves a page out of the main content tree and removes it from normal search results. But the content itself still exists in the system unless it is deleted. In some situations, for example through existing links, indexing behavior, or search inconsistencies, archived content can still appear or remain accessible to systems reading the underlying data.
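One way to make the archiving assumption explicit is to filter on page status before anything reaches the assistant. The sketch below is only an illustration of that idea, not how Confluence or any particular AI feature works: the `Document` class, its `status` field, and the `indexable` function are hypothetical names I made up for the example.

```python
from dataclasses import dataclass


@dataclass
class Document:
    """A simplified page record. All fields are hypothetical."""
    title: str
    status: str  # assumed values: "current" or "archived"
    text: str


def indexable(documents):
    """Keep only pages explicitly marked as current.

    Anything with another status (or an unexpected value) is left out,
    so an archived page cannot quietly feed an answer.
    """
    return [doc for doc in documents if doc.status == "current"]


docs = [
    Document("Onboarding guide", "current", "Request access through the portal."),
    Document("Onboarding guide (2019)", "archived", "Email the helpdesk for access."),
]

for doc in indexable(docs):
    print(doc.title)
```

The choice to allow only `"current"` rather than exclude `"archived"` is deliberate: if a page ends up in some other state nobody planned for, it stays out until a person decides otherwise.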

From the perspective of the person maintaining the documentation, the page feels like it has been removed. From the perspective of the system analyzing the knowledge base, the information may still be there.

For the employee asking a question, none of that background is visible. They simply see an answer that appears clear and confident. Would you even know if the response was correct or not?

If the answer is presented as a fact, there is little reason for someone to question whether it came from documentation that no longer reflects the current way of working.

Where Humans Still Matter

None of this means AI should be avoided in workplace knowledge systems.

Tools that help search and summarize documentation can be extremely useful. They reduce the time people spend digging through pages and help surface information that might otherwise stay hidden.

What changes is where human effort needs to go.

Instead of spending time searching for information, the more important work becomes maintaining the quality of the knowledge itself.

That starts with ownership. Important documentation should have someone responsible for reviewing it and keeping it accurate over time. Without clear ownership, outdated pages slowly build up and systems that rely on them will continue to use them.

Once an AI assistant starts answering questions, it can also help reveal problems in the documentation. One useful practice is asking documentation owners to occasionally test the system the same way an employee would. Instead of reading the page directly, they can ask the AI assistant questions about their own documentation and see what answer comes back. This allows them to see what users actually experience and catch situations where the system summarizes something incorrectly or pulls from outdated material.

AI can also help maintain the knowledge base itself. Organizations could use it to monitor documentation and flag content that has not been updated for a long time. Instead of aging quietly in the background, those pages could trigger reminders for a human review.
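The flagging step does not need anything sophisticated to get started. Here is a minimal sketch, assuming each page record carries an owner and a last-updated date; the `Page` class, the `find_stale_pages` function, and the one-year threshold are all assumptions for illustration, not part of any real product.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Page:
    """A simplified page record. All fields are hypothetical."""
    title: str
    owner: str
    last_updated: date


def find_stale_pages(pages, today, max_age_days=365):
    """Return pages not updated within max_age_days, oldest first."""
    cutoff = today - timedelta(days=max_age_days)
    stale = [page for page in pages if page.last_updated < cutoff]
    return sorted(stale, key=lambda page: page.last_updated)


pages = [
    Page("VPN setup", "alice", date(2021, 3, 1)),
    Page("Expense policy", "bob", date(2024, 11, 15)),
    Page("On-call handbook", "carol", date(2022, 6, 30)),
]

# Each flagged page becomes a reminder for its owner, not an automatic change.
for page in find_stale_pages(pages, today=date(2025, 1, 1)):
    print(f"Review needed: {page.title} (owner: {page.owner}, "
          f"last updated {page.last_updated.isoformat()})")
```

Age alone does not prove a page is wrong, and a recently edited page can still be inaccurate. The point of a check like this is only to put old pages in front of a person who can judge them.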

In this way, AI becomes part of the maintenance process, not just the interface for answers.

The important part is that the final responsibility still sits with people. AI can help surface information, highlight potential issues, and even suggest improvements. But someone still needs to decide whether the content reflects how the organization actually works today.

That is where the idea of a human in the loop becomes practical. AI can deliver knowledge faster than people could search for it themselves, but humans still need to confirm that the knowledge behind those answers is correct.

A Small Shift in Responsibility

What stands out to me about this shift is that AI does not remove the responsibility for maintaining documentation. In many ways it makes that responsibility more visible.

Before AI, messy documentation mostly slowed people down. Someone might spend extra time searching, ask a colleague for help, or eventually find the right page after reading several others.

Now the system often provides an answer immediately. That speed is helpful, but it also means the quality of the knowledge behind the system matters even more.

AI can connect information, summarize documents, and surface answers in seconds. What it cannot do is decide whether that information still reflects how the organization actually works.

That part still belongs to people.

If AI becomes the layer between employees and the knowledge they rely on, then the quality of that knowledge becomes part of the organization’s infrastructure.

It is no longer just documentation. It becomes part of how decisions are made.

And when outdated information is presented with confidence, it can look correct to anyone who doesn’t already know the difference.